Leading Scholars On AI Bias Mitigation Strategies

Artificial intelligence is pretty much everywhere these days, helping with everything from medical research to job recruiting. But as AI becomes more important, so do the conversations around bias. Unfair patterns can sneak into algorithms and lead to discrimination or unequal outcomes. Fortunately, some of the world’s top scholars are working on new strategies to reduce these biases in AI systems. I’m excited to share what I’ve learned about their ideas, approaches, and the latest thinking in this area.

Why AI Bias is a Big Deal

AI bias isn’t just a technical glitch you can fix with a quick patch. These biases show up when AI systems reflect the data they’re trained on, and that data can include historical prejudices or social inequalities. This can lead to unfair hiring practices, problems in healthcare decisions, and much more. Scholars like Dr. Timnit Gebru point out that if left unchecked, these biases can actually reinforce societal problems rather than help solve them. As we trust these systems for important decisions, their flaws have bigger impacts on daily life and social systems.

For instance, if a resume screening AI is trained on data from a workplace that’s mainly hired one demographic, it will probably repeat those patterns unless something is done differently. That’s why bias reduction is a key field of research right now. Companies and governments alike are increasingly aware of the risk and the need for in-depth solutions to tackle it.

Get to Know the Leading Scholars in AI Bias Mitigation

There are some really dedicated thinkers pushing the boundaries of what’s possible when it comes to fair AI. Here are a few I’ve found especially influential:

- Dr. Timnit Gebru: Known for her outspoken approach, her research at Google, and her later work as founder of the Distributed AI Research Institute (DAIR), Dr. Gebru digs into how AI systems impact underrepresented groups and advocates for ethical standards in AI development.

- Dr. Joy Buolamwini: As the founder of the Algorithmic Justice League, Dr. Buolamwini studies facial recognition and its inaccuracies, showing how these errors disproportionately affect marginalized communities. Her work has changed conversations around fairness in tech.

- Dr. Suresh Venkatasubramanian: A computer science professor and respected voice on the use of algorithms in public policy, Dr. Venkatasubramanian has contributed to guidelines that help governments deploy fairer automated systems.

- Dr. Cynthia Dwork: With a background in mathematics and computer science, Dr. Dwork pioneered approaches to measuring and reducing bias mathematically, which has shaped the way companies and researchers design fair algorithms.

There are many others contributing to the field, but these names consistently come up in discussions about making AI better for everyone.

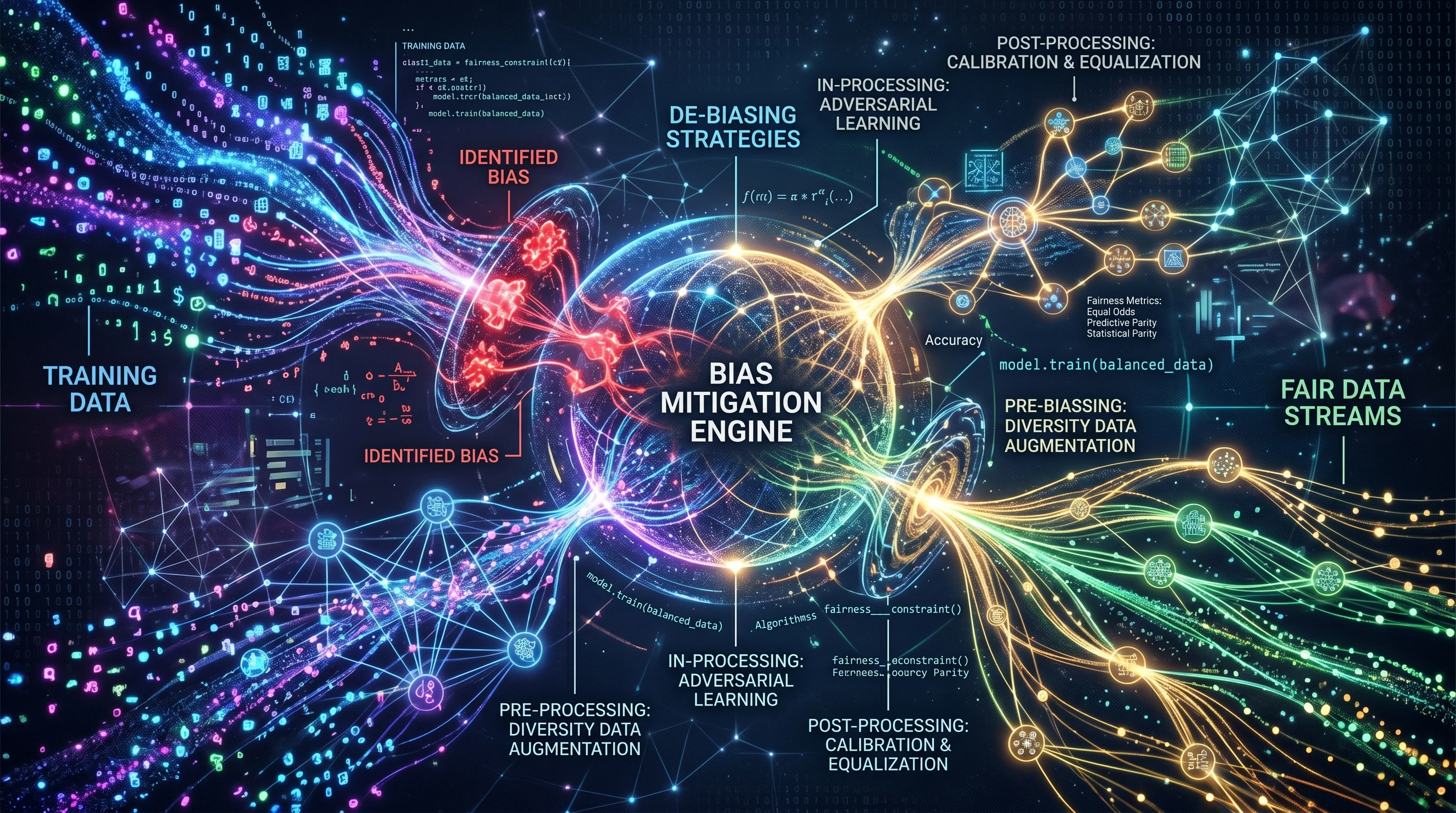

Key Bias Mitigation Strategies from Top Researchers

Every researcher seems to have a slightly different approach, depending on what kind of AI system they’re looking at, and what risks they’re trying to reduce. Here are the main strategies I see referenced the most:

- Data Auditing and Curation: Dr. Gebru and her peers recommend looking closely at where your training data comes from, checking for missing groups, and making sure labels are accurate and diverse. If your data is skewed, your AI will be too.

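- Fairness Algorithms: Dr. Dwork’s research helped establish formal, mathematical definitions of fairness that can be measured and enforced as constraints on algorithms. For example, ‘demographic parity’ asks that a model’s rate of positive predictions be the same across groups. Related definitions relax this: some allow differences that are explained by legitimate factors, and Dr. Dwork’s own ‘individual fairness’ asks that similar individuals be treated similarly.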

- Inclusive Testing: Dr. Buolamwini showed how important it is to test AI systems across different groups before deployment, rather than discovering failures afterwards. Her audits evaluated commercial facial analysis software on faces spanning a range of skin tones and genders to see where errors were concentrated.

- Transparency and Documentation: Leaders like Dr. Gebru encourage public documentation, such as “model cards” and “datasheets for datasets,” that explains how data was collected, what an AI model was trained for, and where it might not do well. This transparency helps both researchers and users check whether the technology they are using fits their needs and expectations.
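To make the demographic parity idea a bit more concrete, here is a minimal sketch in plain Python. The function names and the toy screening decisions are my own illustration, not code from any of the scholars mentioned, and real audits would usually rely on an established fairness library.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Return the share of positive (1) predictions for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions, groups):
    """Largest gap in selection rate between any two groups (0 means parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical screening decisions: 1 = advance, 0 = reject.
predictions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

print(selection_rates(predictions, groups))
print(demographic_parity_difference(predictions, groups))
```

In this made-up data, group "a" is advanced three times out of four and group "b" once out of four, so the gap is large. What counts as an acceptable gap is a judgment call that depends on the use case.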

Breaking Down the Basics: Why Bias Happens in AI

Understanding why AI bias happens is the first step to fixing it. AI models are built by learning from huge data sets, but these data sets often come from real life, which means they reflect real-life flaws and imbalances. For instance, training medical diagnostic AI only on data from certain hospitals can miss out on symptoms or outcomes in other populations.

Other common reasons for AI bias include:

- Not enough variety in training data

- Poorly chosen features, like using zip codes in loan applications, which can act as a stand-in for race or income and so carry neighborhood inequalities into the model

- Lack of transparency in model decisions, which doesn’t create bias by itself but hides where it may be lurking

These issues show up across many sectors, from healthcare to finance to criminal justice. As these technologies grow fast, researchers everywhere are searching for better solutions.
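Two of the causes above, thin coverage of some groups and features that act as proxies, can be checked with very little code. The sketch below is only an illustration: the function names, the reference shares, and the zip code data are all hypothetical, and the proxy check is a rough heuristic rather than a standard metric.

```python
from collections import Counter


def representation_gaps(sample_groups, reference_shares):
    """Compare each group's share of the training data with a reference share."""
    counts = Counter(sample_groups)
    n = len(sample_groups)
    return {
        group: counts.get(group, 0) / n - expected
        for group, expected in reference_shares.items()
    }


def proxy_strength(feature_values, groups):
    """Share of records whose group matches the majority group for their feature value.

    A result near 1.0 means the feature almost determines group membership.
    """
    by_value = {}
    for value, group in zip(feature_values, groups):
        by_value.setdefault(value, Counter())[group] += 1
    matched = sum(c.most_common(1)[0][1] for c in by_value.values())
    return matched / len(groups)


# Hypothetical training set: group "b" is 20% of the data but 50% of the population.
sample_groups = ["a"] * 8 + ["b"] * 2
gaps = representation_gaps(sample_groups, {"a": 0.5, "b": 0.5})
print(gaps)

# Hypothetical zip codes that mostly, but not perfectly, track group membership.
zips = ["z1", "z1", "z1", "z1", "z2", "z2", "z2", "z2"]
zip_groups = ["a", "a", "a", "b", "b", "b", "b", "a"]
print(proxy_strength(zips, zip_groups))
```

A negative gap means a group is underrepresented relative to the reference. The proxy score should be read against the baseline you would get by always guessing the largest group, which is 0.5 in this toy example.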

Practical Steps for Reducing AI Bias: Insights from Scholars

Experts emphasize that this isn’t something you fix with just one tool. Here are practical recommendations from top scholars that anyone building or using AI can start with:

- Collect Diverse Data: Make sure your training set is balanced, regularly updated, and includes a wide range of real-world cases. For example, Dr. Buolamwini recommends taking deliberate steps to source data from underrepresented groups.

- Audit Regularly: Check your algorithms and their outcomes periodically using fairness metrics, not just accuracy. Dr. Dwork suggests using tools designed for this; many open source options exist now.

- Involve Different Perspectives: Collaborative design sessions with affected communities, as advocated by Dr. Gebru, help spot issues you might not otherwise see. This is especially important for algorithms used in schools, government, or policing.

- Be Transparent: Publish documentation and limitations, especially for public-facing algorithms. Transparency helps people understand when an AI tool might fail or be unreliable and assists in building trust.

- Try Multiple Fairness Metrics: No single metric tells the whole story. Dr. Venkatasubramanian advises using several metrics together to get a fuller picture of whether an AI model is treating people fairly.

Scholars suggest these actions are just part of a bigger, ongoing process of review and improvement. The field continues to evolve as new challenges and solutions are found by research teams across the globe.
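Here is a small sketch of why looking at several metrics together matters. It assumes binary labels and predictions, and the function name and data are made up for illustration. The two groups below have identical selection rates, so a demographic parity check alone would pass, yet their error rates are very different.

```python
def group_report(y_true, y_pred, groups):
    """Per-group selection rate, true positive rate, false positive rate, accuracy."""
    report = {}
    for g in sorted(set(groups)):
        rows = [(t, p) for t, p, grp in zip(y_true, y_pred, groups) if grp == g]
        tp = sum(1 for t, p in rows if t == 1 and p == 1)
        fp = sum(1 for t, p in rows if t == 0 and p == 1)
        fn = sum(1 for t, p in rows if t == 1 and p == 0)
        tn = sum(1 for t, p in rows if t == 0 and p == 0)
        report[g] = {
            "selection_rate": (tp + fp) / len(rows),
            # Rates are None when a group has no positives or no negatives.
            "tpr": tp / (tp + fn) if tp + fn else None,
            "fpr": fp / (fp + tn) if fp + tn else None,
            "accuracy": (tp + tn) / len(rows),
        }
    return report


# Hypothetical labels and predictions for two groups of four people each.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

report = group_report(y_true, y_pred, groups)
for name, metrics in report.items():
    print(name, metrics)
```

Both groups are selected half the time, but the model is right about everyone in group "a" and only half of group "b". Comparing true positive rates across groups is sometimes called an equal opportunity check.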

Things to Think About Before Trusting an AI System

Just because a system is labeled “AI-powered” or trusted with important decisions doesn’t mean it’s automatically fair or reliable. Here are some things worth thinking over first:

- Who built the model? Check if the team behind an AI system is open about their funding and priorities. Hidden interests can shape outcomes in subtle ways.

- Where does the data come from? Datasets taken from social media or historical records can carry over real-world prejudices and biases that then affect decisions made by the system.

- What happens if the system makes a mistake? In high-impact applications such as healthcare, policing, or lending, a biased decision can do real damage and have a lasting impact on people’s lives.

- Is there a feedback loop? Some AI systems keep learning based on user behavior, and they can “double down” on initial biases if those aren’t checked regularly. Regular feedback and oversight are essential to keep things in check.

Examples: Where AI Bias Has Been Challenged and Fixed

Facial recognition technology is one of the clearest examples. Research from scholars like Dr. Buolamwini showed that some commercial facial analysis software had much higher error rates for women and people with darker skin tones than for men with lighter skin, with the highest error rates for darker-skinned women. After these findings were published, several companies paused or limited sales of this technology to law enforcement and improved their training sets to make results more even across groups.
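The kind of breakdown behind findings like these can be sketched in a few lines. Everything below is hypothetical: the record keys, the helper names, and especially the numbers, which are invented for illustration and are not results from any published study.

```python
from collections import defaultdict


def error_rates_by_subgroup(records):
    """Error rate for each (skin_type, gender) subgroup.

    Each record is a dict with hypothetical keys: 'skin_type', 'gender', 'correct'.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for r in records:
        key = (r["skin_type"], r["gender"])
        totals[key] += 1
        errors[key] += 0 if r["correct"] else 1
    return {key: errors[key] / totals[key] for key in totals}


def make_records(skin_type, gender, n_correct, n_wrong):
    """Build made-up evaluation records for one subgroup."""
    return [
        {"skin_type": skin_type, "gender": gender, "correct": i < n_correct}
        for i in range(n_correct + n_wrong)
    ]


records = (
    make_records("lighter", "male", 4, 0)
    + make_records("lighter", "female", 3, 1)
    + make_records("darker", "male", 3, 1)
    + make_records("darker", "female", 2, 2)
)

overall = sum(not r["correct"] for r in records) / len(records)
by_subgroup = error_rates_by_subgroup(records)
print("overall error rate:", overall)
for key, rate in by_subgroup.items():
    print(key, rate)
```

The overall error rate in this toy data looks moderate, but splitting by both attributes at once shows one subgroup with no errors and another where half the results are wrong. A single aggregate number would hide that.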

Another area is credit scoring. Traditional credit scores sometimes exclude people who don’t have a long financial history, which can disadvantage young people or immigrants. Work by data scientists and policy experts like Dr. Venkatasubramanian encourages the use of alternative data sources and more open scoring methods to make lending fairer and more inclusive.

Common Challenges When Reducing Bias in AI

Fixing AI bias isn’t always straightforward. Some pitfalls people often run into include:

- Balancing fairness with accuracy. Sometimes making an algorithm more fair can reduce its overall accuracy, and finding the right tradeoff depends on the use case and the stakes involved.

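- Competing definitions of “fairness.” There’s no universal meaning, and what seems fair in one context might seem unfair in another. Some formal fairness criteria also can’t all be satisfied at once, so teams have to choose which ones matter most for their use case and revisit that choice as conditions change.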

- Technical and organizational constraints. Sometimes teams simply don’t have enough data, funding, or time to do all the recommended steps, but even small, incremental improvements matter.

Despite these hurdles, more organizations are starting to treat fairness as a core goal, not just a “nice to have.” This shift marks a turning point for both technology companies and the societies they serve.

Advanced Tips: What Leading Scholars Suggest for the Future

Scholars working at the crossroads of technology, ethics, and law are coming up with even more creative ways to spot and tone down bias in AI.

- Continuous Learning: Algorithms should adapt to new data and flag when previous patterns no longer apply. This helps avoid repeating old mistakes and keeps performance fair over time.

- External Audits: Just like with financial audits, third-party checks of algorithms add another layer of transparency and accountability. Some experts are calling for these to become routine in areas that affect lots of people.

- Policy and Regulation: Many leaders call for stronger rules from governments about AI transparency, data rights, and discrimination, so organizations aren’t left guessing what’s okay and what’s not.

- Ethics Boards and Community Feedback: Several scholars suggest setting up independent groups that include community voices, ethicists, and tech experts to keep an eye on major AI deployments and ensure broad perspectives are heard.

As we get into more complex AI uses, these extra checks become even more essential to prevent new kinds of bias from sneaking in down the road.
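One simple way to act on the continuous monitoring idea is to recompute a fairness metric on each new batch of decisions and flag batches where it drifts. This is only a sketch: the function name, the batch format, and the 0.1 threshold are assumptions I picked for illustration, not recommended values.

```python
def flag_drifting_windows(windows, max_gap=0.1):
    """Return indices of time windows where the selection-rate gap exceeds max_gap.

    Each window is a list of (group, prediction) pairs with predictions 0 or 1.
    max_gap is an illustrative threshold, not a recommended value.
    """
    flagged = []
    for i, window in enumerate(windows):
        totals, positives = {}, {}
        for group, pred in window:
            totals[group] = totals.get(group, 0) + 1
            positives[group] = positives.get(group, 0) + pred
        rates = [positives[g] / totals[g] for g in totals]
        # Skip empty windows; otherwise compare the best and worst treated groups.
        if rates and max(rates) - min(rates) > max_gap:
            flagged.append(i)
    return flagged


# Hypothetical decisions from two consecutive review periods.
windows = [
    [("a", 1), ("a", 0), ("b", 1), ("b", 0)],  # equal selection rates
    [("a", 1), ("a", 1), ("b", 1), ("b", 0)],  # group "a" now favored
]

print(flag_drifting_windows(windows))
```

A flagged window is a prompt for human review rather than proof of a problem, since small batches can show large gaps by chance.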

Frequently Asked Questions About AI Bias and Mitigation

Readers often wonder how to spot bias, what steps are feasible for smaller companies, and if fairness improvements mean losing accuracy. Here are some practical answers based on expert advice:

Question: How do I know if an AI tool is biased?

Answer: Check whether the developers provide fairness metrics or audit reports. Look for open discussions about risks, data sources, and known limitations. Transparency is a positive sign that a tool is being looked at critically.

Question: Can small teams or startups afford to address bias?

Answer: Absolutely. Many open source tools exist for bias detection and testing, such as Fairlearn and AI Fairness 360, and collecting more inclusive data can start as a focused process without requiring a total overhaul.

Question: Does fixing bias always mean less accurate AI?

Answer: Not always. While there are tradeoffs, smart design of fairness improvements often gives a boost to overall trust and can even help accuracy with groups that were not well-served before. The key is to test and learn as you go.

Wrapping Up

Figuring out how to handle bias in AI isn’t a one-time job. It takes ongoing work by researchers, developers, policymakers, and the communities affected by these technologies. The leading scholars I’ve mentioned are paving the way with new ideas and tools, but it’s a field where everyone has something to add. By staying curious, asking questions, and pushing for more transparency and fairness, everyone can help make sure that AI’s benefits are shared widely and justly.
